Preface

We have recently been porting some of our products and prototypes made in Unity to the HTC Vive Focus, the new standalone headset from HTC. This blog post shares some of our insights, opinions and tips from that work.

This blog post is not a tutorial, but more of a discussion of the HTC Vive Focus from a Unity developer’s point of view. I hope to shed light on a few bits that had me stumbling so other developers can avoid those bumps in the road.

First, a little about me: my name is Tom, and I am a senior developer at Future Visual and a lover of new technology and its applications. I have been working with VR headsets since the Oculus DK1 and have created many VR training applications in my 6 years in the industry.

The headset

The [HTC Vive] Focus is an interesting addition to the VR world. It removes the wires that usually attach to premium headsets while keeping 6 degrees of freedom (6DOF) without the need for any external tracking, at the cost of the raw power of a full desktop computer. I’ve found the performance decent enough; you could certainly push some pretty nice scenes out of it if you designed and optimised them well.

The headset is very comfortable. The strap was hard to figure out at first, but once it is properly adjusted the headset sits well on your head. Personally I really like the look of it; it is definitely a step up from the blocky screen-in-front-of-your-face designs seen in a lot of premium headsets.

One of the most surprising features I found on the headset was the inside-out tracking and how it just worked as soon as the headset was put on. The only other inside-out tracking headset I have tried is Acer’s Windows Mixed Reality headset, and the Focus was definitely much quicker at tracking 6DOF movement, with just as much fidelity.

The lack of 6DOF in the controller, however, is a bit of a disappointment, as that would really make it feel like a premium headset gone wireless. HTC did say recently at a conference in China that the controller could be used as a 6DOF one. That aside, the controller is still a functional tool for interacting with the VR user interface, if a bit unwieldy at times.

Developing for the headset

This information is correct as of 7/06/2018. With VR, things can change rapidly.

At its most basic, the Focus is an Android phone: you plug it into your computer like one, you deploy to it like one and you can access it like one (with the Android SDK’s Android Debug Bridge (adb) tool). I found it easier to set up adb to work wirelessly with the headset, so I can simply put the headset on and take it off without having to plug a cable in and out. (There’s an answer on how to do this here.)
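As a rough sketch of the wireless setup, the usual adb approach is to switch the device to TCP/IP mode while it is still plugged in over USB and then connect to it by IP address. The helper names (`run_adb`, `adb_wireless`), the `DRY_RUN` switch and the example IP address below are my own illustrative assumptions, and port 5555 is simply the conventional adb default; I am assuming the headset and the computer are on the same Wi-Fi network.

```shell
#!/bin/sh
# Hypothetical wrapper: with DRY_RUN set, print the adb command instead of
# running it, so the steps can be checked without a headset attached.
run_adb() {
    if [ -n "$DRY_RUN" ]; then
        echo "adb $*"
    else
        adb "$@"
    fi
}

# Hypothetical helper: switch the USB-connected headset to TCP/IP mode and
# connect to it over Wi-Fi.
#   $1 = headset IP address (required)
#   $2 = TCP port (optional, defaults to 5555)
adb_wireless() {
    ip="$1"
    port="${2:-5555}"
    if [ -z "$ip" ]; then
        echo "usage: adb_wireless <headset-ip> [port]" >&2
        return 1
    fi
    # Restart the adb daemon on the device so it listens on the TCP port.
    run_adb tcpip "$port" || return 1
    # Give the daemon a moment to restart (skipped in dry-run mode).
    [ -n "$DRY_RUN" ] || sleep 2
    # Connect over the network; the USB cable can be unplugged afterwards.
    run_adb connect "$ip:$port"
}
```

After sourcing the file, something like `adb_wireless 192.168.0.42` (with your headset’s actual address) should leave the headset listed in `adb devices` once the cable is removed. The setting may not survive a reboot of the headset, in which case the steps need repeating over USB.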

Unity

The Vive Focus is used in Unity through an SDK package called Wave VR. This is HTC’s mobile VR package, and it looks like it supports more than just the Focus. The documentation has a Unity section containing a page called ‘Unity Plugin Getting Started’ that is definitely required reading. It will tell you that you need:

- Android SDK 7.1.1 (API level 25 or higher)

- Android SDK Tools version 25 or higher

- Unity Android Package, with the Android SDK and the JDK set up

- Wave VR Unity SDK (it’s within the main Wave VR SDK download)

One of the strangest things I had to do in Unity was make sure that VR/XR Enabled was turned off in the Player Settings. I guess this is because Unity’s own XR integration can interfere with the Wave VR integration.

When you’ve imported the SDK, it helpfully brings up a menu suggesting a few things; I’d suggest accepting them all.

The Basics

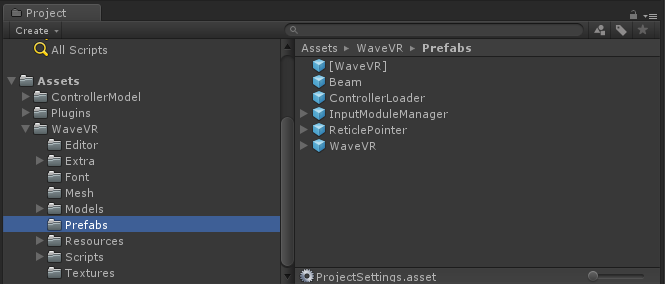

The basic prefab you need to get head movement in a Unity scene is the ‘WaveVR’ prefab. Don’t confuse it with the ‘[WaveVR]’ prefab which, as far as I can tell, simply initialises the systems and doesn’t set up any cameras. The ‘WaveVR’ prefab contains a camera called “head” which has all the scripts you need for basic head tracking.

The Controller

To see the Focus’ controllers you’ll need either the ‘ControllerLoader’ prefab, which will dynamically load in the controllers when they connect (I assume this is for the other headsets/controllers the WaveVR SDK supports), or, if you want the Focus controller already in the scene (like we did), the correct controller prefab. This is a bit confusing as they all seem to have a sort of code name associated with them, but if you look at the actual controller models, you can deduce that the ‘Finch’ controller looks the same as the Focus’ one. The individual controller prefabs are under their respective folders in the ‘ControllerModel’ folder from the SDK. They’re placed in resources because the ‘ControllerLoader’ loads them from there dynamically, but you can just drag them into the scene ahead of time instead.

I found that if you don’t parent the controller under the ‘WaveVR’ prefab, then when you move the head away, the controller stays in the same position (at least with the Virtual Reality Toolkit’s (VRTK) teleporting). So parent the ‘WVR_CONTROLLER_FINCH3DOF_1_0_MC_R’ prefab (use the right-hand one, as that’s what WaveVR assumes is the first and only controller) under the ‘WaveVR’ game object to get the desired controller-attached-to-head tracking.

To access the inputs from the controller, you call the static ‘Input’ function on the ‘WaveVR_Controller’ class to get the desired device class (using the ‘WVR_DeviceType’ enum); from that you can get all sorts of inputs from the controller. One thing to note is that the button on the end, which you may think of as a ‘trigger’, is actually a ‘bumper’ in the terms of this SDK.

Testing

When it came to testing our apps, we found it pretty much as straightforward as testing a usual Android app. However, as there is no touchscreen and right now there isn’t a quick way of accessing a currently running app’s properties, I’d suggest adding a way to quit your app inside the app itself. Otherwise you have to go through two sets of settings menus to reach the list of apps and ‘Force Stop’ the app in order to actually restart it.
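While the headset is still reachable over adb during development, the same ‘Force Stop’ can also be issued from the computer, which may save the trip through the settings menus. This is only a sketch of that idea and not a replacement for an in-app quit option: the helper names (`run_adb`, `stop_app`), the `DRY_RUN` switch and the package name `com.example.focusdemo` are hypothetical, while `am force-stop` is the standard Android activity manager command.

```shell
#!/bin/sh
# Hypothetical wrapper: with DRY_RUN set, print the adb command instead of
# running it, so the call can be checked without a headset attached.
run_adb() {
    if [ -n "$DRY_RUN" ]; then
        echo "adb $*"
    else
        adb "$@"
    fi
}

# Hypothetical helper: force-stop an app by its Android package name,
# the adb equivalent of pressing 'Force Stop' in the settings menus.
#   $1 = package name (required), e.g. the one set in Unity's Player Settings
stop_app() {
    pkg="$1"
    if [ -z "$pkg" ]; then
        echo "usage: stop_app <package-name>" >&2
        return 1
    fi
    run_adb shell am force-stop "$pkg"
}
```

For example, `stop_app com.example.focusdemo` (substituting your own package name) should kill the running app so that the next launch starts fresh.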

Closing thoughts

I rather enjoyed developing for the HTC Vive Focus. It was pretty easy in the end (once all the tricks and quirks were figured out) to iterate and improve things. The wireless nature of it definitely gives it a free feeling you rarely get with a lot of premium headsets. As a developer in a small studio, with plenty of other developers in VR headsets, it’s nice to just stand off to one side and test something, rather than bumping into your colleagues awkwardly!

I am certainly looking forward to trying a 6DOF controller with it, and to whatever future updates to the headset will bring, as HTC seem quite keen to iterate, update and listen to developers (and the community seems quite active too).
