Integrating #ARKit and multiplayer #VR using #unity3d: https://t.co/1VGtmFbTBt

So much potential here! (Future Visual, @FutureVisualVR, July 17, 2017)

Augmented Reality has taken a huge step forward with Apple's release of ARKit in iOS 11. While we've used technologies such as Vuforia and ARToolKit for AR in previous projects, ARKit provides a native framework with very stable SLAM tracking.

At Future Visual we're always experimenting with the latest technology, and we're committed to producing the best possible real-time experiences. With the release of ARKit, we just had to see what was possible.

As we focus on multi-user VR, we wondered how we could combine ARKit and VR in a demo where multiple users interact with each other. We've experimented with green-screen Mixed Reality before, but we wanted to see what was possible with ARKit.

We have our own internal framework for multi-user VR, which we adapted for ARKit with little effort. A few days of experimentation later, our demo was born!

What does this proof of concept show?

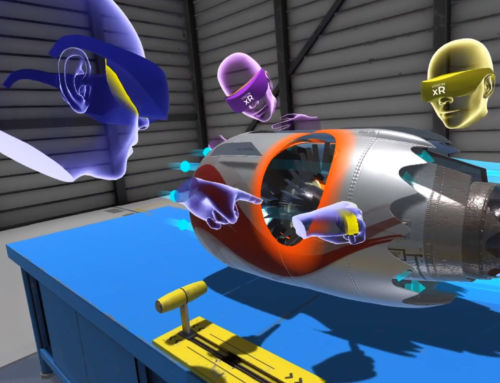

Our demo shows how a user with an iPad can place the VR users' environment onto a real-world surface, walk around it to see what the VR users are doing from any angle, and interact with both the scene and the VR users. This creates an Augmented Reality / Mixed Reality window into the VR world.
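The post doesn't say how the VR environment is aligned with the surface the iPad user picks. One common approach, sketched here as an assumption rather than as Future Visual's implementation, is to treat the chosen surface point as an anchor with a position, a rotation about the vertical axis and a uniform scale, and then pass every networked VR position through that transform. The names `TabletopAnchor`, `vr_to_ar` and `ar_to_vr` are hypothetical. The code assumes a y-up, right-handed frame in metres. Unity itself is left-handed, so a real port would need to account for that.

```python
import math
from dataclasses import dataclass

Vec3 = tuple[float, float, float]


@dataclass(frozen=True)
class TabletopAnchor:
    """Hypothetical pose of the VR environment placed on a real surface.

    position: where the VR world's origin sits in the AR session frame (metres).
    yaw:      rotation about the vertical (y) axis, in radians.
    scale:    uniform VR-to-AR scale, e.g. 0.05 shrinks a room onto a tabletop.
    """
    position: Vec3
    yaw: float
    scale: float


def _rotate_y(v: Vec3, angle: float) -> Vec3:
    # Right-handed rotation about the y (up) axis; height is unchanged.
    c, s = math.cos(angle), math.sin(angle)
    x, y, z = v
    return (c * x + s * z, y, -s * x + c * z)


def vr_to_ar(anchor: TabletopAnchor, p: Vec3) -> Vec3:
    """Map a VR-world point (e.g. a VR user's head) into AR session space."""
    scaled = tuple(anchor.scale * c for c in p)  # scale first...
    rx, ry, rz = _rotate_y(scaled, anchor.yaw)   # ...then rotate...
    ax, ay, az = anchor.position
    return (ax + rx, ay + ry, az + rz)           # ...then translate


def ar_to_vr(anchor: TabletopAnchor, p: Vec3) -> Vec3:
    """Inverse mapping, e.g. turning a tap on the table into a VR-world point."""
    ax, ay, az = anchor.position
    local = (p[0] - ax, p[1] - ay, p[2] - az)    # undo the translation
    unrotated = _rotate_y(local, -anchor.yaw)    # undo the rotation
    return tuple(c / anchor.scale for c in unrotated)  # undo the scale
```

For example, with `TabletopAnchor(position=(0.2, 0.75, -0.5), yaw=math.pi / 2, scale=0.05)`, a VR head at `(2.0, 1.6, 0.0)` maps to approximately `(0.2, 0.83, -0.6)` in AR space, and `ar_to_vr` maps it back. In this design the iPad only needs the anchor and the VR users' streamed poses. The same mapping would therefore still work if the iPad user were in a different location.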

This could be useful for applications where an observer needs to see exactly what someone is doing in VR and make changes to the virtual world – or just for fun! It could easily be adapted to allow the iPad user to be at a remote location.

How did we make this?

Without going into too many details, the main components of the project were:

Want to find out more?

Just get in contact!
